Most teams building Solana AI agents focus on the intelligence layer: the LLM, the framework, the strategy logic. Infrastructure is treated as a second-tier commodity. It is not.

Three failure modes account for the majority of agent underperformance in production:

- Stale data. Your agent reads account state that is 2–3 slots behind the network tip. On Solana, that is 800–1,200ms of lag. By the time your agent acts, the opportunity no longer exists.

- Transaction drop rate. During congestion, shared RPC endpoints rate-limit and drop transactions. An agent that fires 100 transactions and lands 40 is not an agent. It is an expensive random number generator.

- Subscription failures. WebSocket streams drop. Reconnection takes 5–10 seconds. Your agent is blind during the worst possible window.

These failures are the default behavior of commodity infrastructure under load. The gap between a shared public endpoint and a dedicated colocated node translates directly to P&L.
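The first two failure modes reduce to simple arithmetic. Here is a minimal sketch, assuming the nominal 400ms slot time used in this article; the function names are illustrative and do not come from any Solana SDK.

```python
SLOT_MS = 400  # nominal Solana slot time; real slot times vary slightly


def staleness_ms(slots_behind: int, slot_ms: int = SLOT_MS) -> int:
    """Approximate age of account state read from a node that lags the tip."""
    return slots_behind * slot_ms


def landing_rate(sent: int, landed: int) -> float:
    """Fraction of submitted transactions that actually landed on chain."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return landed / sent


print(staleness_ms(2), staleness_ms(3))  # lag for a node 2 and 3 slots behind
print(landing_rate(100, 40))             # the 100-sent, 40-landed case above
```

Tracking both numbers per endpoint is a cheap way to see whether the infrastructure, rather than the strategy, is the source of underperformance.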

What "low-latency" actually means on Solana

On Ethereum, latency is measured in seconds. On Solana, it is measured in slots, and one slot is roughly 400ms. Low-latency on Solana means:

- Data freshness: Your node is at the network tip, zero slots behind.

- Submission speed: Transaction reaches the current slot leader before the slot closes.

- Shred-level access: You see block data as it is produced, not after it propagates through gossip.

The benchmark that matters is not average latency. It is p99. During a normal session, a shared endpoint and a dedicated bare-metal node look similar. During a memecoin launch or a liquidation cascade, the shared endpoint begins to miss slots. The bare-metal node continues to run at 40ms.
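To see why the average hides the problem, consider a small sketch with made-up latency samples. The numbers below are hypothetical and chosen only to illustrate the effect; the percentile helper uses the nearest-rank method.

```python
import math
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical request latencies in ms: 98 fast responses and 2 slow ones.
latencies_ms = [40] * 98 + [900] * 2

mean_ms = statistics.mean(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f}ms p50={p50_ms}ms p99={p99_ms}ms")
```

The mean and the median both look healthy here, while p99 exposes a tail more than two slots long. That tail is where trades are lost.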

According to Dysnix's open benchmark comparing Jito ShredStream against Yellowstone gRPC across 2,078,707 matched transactions:

- ShredStream delivered data first in 64.5% of cases

- Average time advantage: 32.8ms

- Maximum time advantage: 1,323ms

That 32.8ms average can be the difference between landing a copy-trade in the same slot and arriving one slot late. For MEV, it is the difference between a profit and the cost of a failed bundle.
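If you want to run a similar head-to-head comparison on your own feeds, the summary statistics are straightforward to compute. The sketch below is an assumption about how such a comparison could be summarized, not the Dysnix methodology; the timestamps are hypothetical, and the average is taken only over transactions where feed A arrived first.

```python
from statistics import mean


def compare_feeds(arrivals):
    """Summarize which feed delivered each matched transaction first.

    arrivals: list of (feed_a_ms, feed_b_ms) arrival times for the same transaction.
    """
    if not arrivals:
        raise ValueError("need at least one matched transaction")
    leads = [b - a for a, b in arrivals if a < b]  # ms by which feed A was ahead
    return {
        "a_first_share": len(leads) / len(arrivals),
        "avg_lead_ms": mean(leads) if leads else 0,
        "max_lead_ms": max(leads, default=0),
    }


# Hypothetical arrival times (ms) for four matched transactions.
sample = [(100, 130), (200, 190), (300, 345), (400, 415)]
summary = compare_feeds(sample)
print(summary)
```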

Architecture overview

A production low-latency stack for a Solana AI agent has five layers. Each one has a specific job. A failure in any layer degrades the whole system.

Each layer feeds the next, passing along either its errors or its latency savings. Your agent's decision quality is only as good as the data it receives. Its execution quality is only as good as the submission path it uses.

Step 1: Build the data layer

Your agent reads the state before it acts. The quality of that read determines whether the action is valid.

The problem with polling

Standard getAccountInfo calls over JSON-RPC are synchronous, rate-limited, and slow. An agent polling every 100ms is already behind a bot using push-based streaming.

Use Yellowstone gRPC

Yellowstone gRPC (Dragon's Mouth) delivers account updates, transaction data, and slot changes directly from validator memory—before the data is serialized through the standard RPC layer. Key advantages over WebSocket:

Set up server-side filters. Subscribe only to the accounts and programs your agent needs—specific AMM pools, lending protocol positions, target wallets. Filtering at the source eliminates wasted bandwidth and CPU overhead in your agent pipeline.
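As a rough illustration of narrow filtering, the sketch below builds a plain dictionary shaped like a Yellowstone subscription request. The field names (`accounts`, `transactions`, `account`, `owner`, `account_include`, `vote`, `failed`) follow the Yellowstone gRPC proto as we understand it, and the string commitment value stands in for the proto enum; verify both against the proto version your provider runs. The filter labels and addresses are placeholders. Pools and programs go into separate filters on the assumption that fields inside one account filter are combined with AND.

```python
def build_subscribe_request(pool_accounts, program_ids, wallets):
    """Build a dict shaped like a Yellowstone SubscribeRequest with narrow filters."""
    if not (pool_accounts or program_ids or wallets):
        # An empty filter set would mean "send everything", which defeats the purpose.
        raise ValueError("refusing to subscribe without filters")

    request = {"accounts": {}, "transactions": {}, "commitment": "processed"}
    if pool_accounts:
        # Updates for specific accounts, e.g. AMM pool state.
        request["accounts"]["pools"] = {"account": list(pool_accounts)}
    if program_ids:
        # Updates for every account owned by the listed programs.
        request["accounts"]["programs"] = {"owner": list(program_ids)}
    if wallets:
        # Successful non-vote transactions that touch the target wallets.
        request["transactions"]["target_wallets"] = {
            "vote": False,
            "failed": False,
            "account_include": list(wallets),
        }
    return request


# Placeholder addresses; substitute real base58 public keys.
request = build_subscribe_request(
    pool_accounts=["<POOL_ACCOUNT_PUBKEY>"],
    program_ids=["<LENDING_PROGRAM_ID>"],
    wallets=["<TARGET_WALLET_PUBKEY>"],
)
```

In a real client this structure would be passed to the generated gRPC stub rather than used as a dictionary.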

Add ShredStream for the earliest signal

Jito ShredStream delivers raw transaction shreds from the slot leader before full block propagation. For copy-trading bots that need same-slot execution, or MEV searchers targeting sub-slot arbitrage, ShredStream is the earliest possible data source on Solana.

The Dysnix benchmark above shows ShredStream arriving first in 64.5% of cases with a 32.8ms average advantage. In a copy-trading context, that window is the difference between mirroring a KOL trade in the same slot and arriving one slot late.

Step 2: Configure the RPC layer

Your RPC node is the nervous system of your agent. Every state read, every transaction submission, every subscription flows through it.

Why public endpoints fail under load

Solana's public endpoints (api.mainnet-beta.solana.com) are shared across thousands of users, rate-limited, and geographically distant from most validators. During high-congestion events, they degrade first. Your agent's transactions fail.

Dedicated vs. shared: The decision matrix

For latency-sensitive strategies, the dedicated path is not optional. A shared cloud VM introduces unpredictable latency spikes caused by other tenants competing for the same CPU and memory. Under Solana's constant throughput, those spikes show up at exactly the wrong moment.

RPC method requirements by strategy

Not every agent needs the same RPC surface. Match your node tier to your actual call pattern:

- Yield optimization / DeFi automation: getAccountInfo, simulateTransaction, getLatestBlockhash. Runs on a Light node (512GB RAM).

- Copy-trading / pool monitoring: getProgramAccounts, getTokenAccountsByOwner. Requires Medium tier (768GB–1TB RAM).

- MEV / custom indexing: unrestricted method access, custom plugins. Pro tier (1–1.5TB RAM).
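The mapping above can be turned into a quick check against your agent's actual call log. This is a sketch built only from the methods listed in this section; treating every unlisted method as Pro is our assumption, not a published rule.

```python
TIER_ORDER = ["Light", "Medium", "Pro"]

# Method-to-tier mapping taken from the breakdown above.
METHOD_TIER = {
    "getAccountInfo": "Light",
    "simulateTransaction": "Light",
    "getLatestBlockhash": "Light",
    "getProgramAccounts": "Medium",
    "getTokenAccountsByOwner": "Medium",
}


def minimum_tier(methods):
    """Return the lowest tier that covers every method the agent calls."""
    needed = 0
    for method in methods:
        tier = METHOD_TIER.get(method, "Pro")  # assumption: unlisted methods need Pro
        needed = max(needed, TIER_ORDER.index(tier))
    return TIER_ORDER[needed]


print(minimum_tier(["getAccountInfo", "simulateTransaction"]))
print(minimum_tier(["getAccountInfo", "getProgramAccounts"]))
```

Feed it the distinct method names from a day of production traffic rather than the methods you think the agent uses.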

RPC Fast's dedicated node tiers map directly to this breakdown, with Jito ShredStream and Yellowstone gRPC included by default across all tiers.

Step 3: Optimize the execution layer

Your agent made the right call. Now it needs to land the transaction in the current slot—not the next one.

How Solana transaction inclusion works

Transactions reach the slot leader via RPC or relays, get queued, and are included if they arrive with a fresh blockhash and sufficient priority. The leader changes every four slots (~1.6 seconds). Miss the window, and you have to retry, risking blockhash expiry and wasted fees.
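The timing budget can be sketched in a few lines. This assumes leader windows are aligned to multiples of four slots and uses the nominal 400ms slot time; `last_valid_block_height` is the value returned alongside a blockhash by `getLatestBlockhash`. The helper names are illustrative.

```python
SLOTS_PER_LEADER = 4  # consecutive slots assigned to one leader
SLOT_MS = 400         # nominal slot time


def slots_left_with_leader(current_slot: int) -> int:
    """Slots remaining in the current leader's window, counting the current slot."""
    return SLOTS_PER_LEADER - (current_slot % SLOTS_PER_LEADER)


def window_ms_left(current_slot: int) -> int:
    """Upper bound on the time left before the leader rotates."""
    return slots_left_with_leader(current_slot) * SLOT_MS


def blockhash_expired(current_block_height: int, last_valid_block_height: int) -> bool:
    """A transaction can no longer land once the chain passes its last valid block height."""
    return current_block_height > last_valid_block_height


print(window_ms_left(100))  # first slot of a window
print(window_ms_left(103))  # last slot of a window
```

An agent can use the remaining window to decide whether to target the current leader or route to the next one, and should check expiry before every retry.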

Use Jito bundles for guaranteed order execution

Jito's block engine accepts bundles of up to five atomically executed transactions with an attached SOL tip. With ~92% of Solana validators by stake running the Jito client, submitting through Jito gives your bundle the highest probability of reaching the current leader.

For arbitrage agents: bundle your buy and sell instructions atomically. The bundle either executes in full or not at all—no partial fills, no sandwich exposure.

Tip calibration matters. Arbitrage bots on Solana typically pay 50–60% of extracted profit in validator tips. Calibrate dynamically based on competition level. Overpaying on low-competition slots burns margin. Underpaying on high-competition slots means your bundle gets skipped.
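One simple way to calibrate is to scale the tip share with a competition score. The sketch below is an assumption, not a Jito recommendation: it treats the 50–60% range above as lower and upper bounds, and the `competition` score in [0, 1] is a hypothetical input you would derive from your own signals, such as recent bundle rejection rates. Integer basis points avoid floating-point rounding on lamport amounts.

```python
def calibrate_tip(expected_profit_lamports: int, competition: float,
                  min_bps: int = 5000, max_bps: int = 6000) -> int:
    """Return a tip in lamports, scaling linearly from min_bps to max_bps of profit."""
    if expected_profit_lamports <= 0:
        return 0  # no profit expected, no reason to tip
    competition = min(1.0, max(0.0, competition))  # clamp to [0, 1]
    share_bps = min_bps + round((max_bps - min_bps) * competition)
    return expected_profit_lamports * share_bps // 10_000


print(calibrate_tip(1_000_000, 0.0))  # quiet slot
print(calibrate_tip(1_000_000, 1.0))  # heavily contested slot
```

A linear ramp is the simplest possible policy. In practice you would fit the curve to your own landed and skipped bundles.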

Add bloXroute for relay diversity

bloXroute's BDN routes transactions through purpose-built relay nodes rather than the standard gossip network. Its primary advantage over Jito is geographic diversity—the BDN covers edge cases where the current slot leader is in a region where Jito's direct validator ties are weaker.

In October 2025, bloXroute introduced leader-aware submission that scores current and upcoming slot leaders in real time and dynamically adjusts submission paths—delaying or skipping high-risk leaders while accelerating through trusted ones.

The practical approach: submit to both Jito and bloXroute in parallel. The marginal cost is negligible. The improvement in inclusion rate is not.
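Parallel submission is easy to express with asyncio. The sketch below sends the same signed transaction through every configured path at once and records what each path returned. The two sender coroutines are stand-ins for demonstration; real ones would wrap the Jito and bloXroute client calls, which are not shown here. Sending identical bytes through several paths is safe because the network processes a given signature at most once.

```python
import asyncio


async def submit_everywhere(signed_tx: bytes, senders: dict, timeout_s: float = 2.0) -> dict:
    """Send one signed transaction through every path concurrently.

    senders maps a path name to an async callable taking the signed bytes.
    Returns {path_name: result_or_exception}.
    """
    async def guarded(send):
        # Bound each path so one slow relay cannot stall the agent.
        return await asyncio.wait_for(send(signed_tx), timeout=timeout_s)

    names = list(senders)
    outcomes = await asyncio.gather(
        *(guarded(senders[name]) for name in names), return_exceptions=True
    )
    return dict(zip(names, outcomes))


def accepted_paths(outcomes: dict) -> list:
    """Names of the paths that accepted the transaction without raising."""
    return [name for name, result in outcomes.items() if not isinstance(result, Exception)]


# Stand-in senders for demonstration only.
async def fake_jito_sender(tx: bytes) -> str:
    await asyncio.sleep(0.01)
    return "accepted-by-jito"


async def fake_bloxroute_sender(tx: bytes) -> str:
    raise ConnectionError("relay unavailable")


outcomes = asyncio.run(submit_everywhere(
    b"signed-transaction-bytes",
    {"jito": fake_jito_sender, "bloxroute": fake_bloxroute_sender},
))
print(accepted_paths(outcomes))
```

Collecting every outcome, rather than stopping at the first success, gives you per-path acceptance data to feed back into routing decisions.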

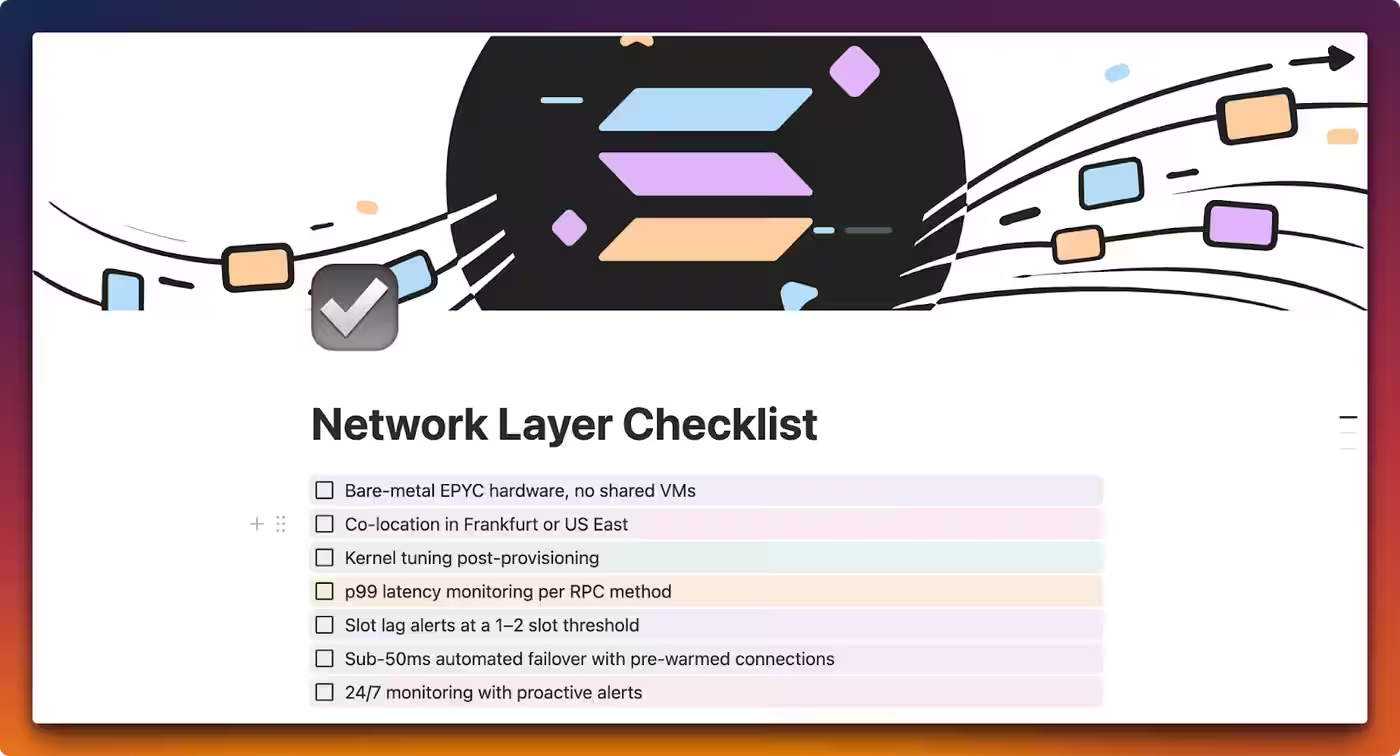

Step 4: Harden the network layer

The fastest data feed and the best submission path mean nothing if your node goes offline during a volatile market event. Five minutes of downtime during a liquidation cascade costs more than a month of infrastructure.

Hardware baseline

Bare-metal is not optional for serious execution. Shared cloud VMs introduce noisy-neighbor latency—unpredictable spikes caused by other tenants competing for CPU, memory, and network resources. Under Solana's constant throughput, those spikes show up as slot lag at exactly the wrong moment.

The dominant hardware choice for MEV-optimized setups: AMD EPYC 9355 or EPYC 9005 series. High single-thread performance, large L3 cache, SHA and AVX2 extensions. 512GB–1.5TB DDR5 RAM, depending on RPC method requirements. NVMe SSD storage—Solana generates ~1TB of new data per day, and slow I/O translates directly to slot lag.

Colocation strategy

The highest concentration of high-stake Solana validators runs in US East Coast data centers (Ashburn, VA; New York Metro) and Western Europe (Frankfurt, Amsterdam). Co-locating your bot and RPC node in the same physical data center as a high-stake validator eliminates geographic hops between your state reader, your bundle submitter, and the validator that includes your transaction.

Same-DC colocation saves 5–15ms per request. LAN-local RPC access drops latency from 20–100ms to sub-1ms. According to RPC Fast internal benchmarks, co-location achieves a 5–10x latency reduction compared to a remote cloud setup.

Kernel-level tuning

After provisioning, tune the node for Solana's high-throughput characteristics:

- sysctl adjustments for TCP buffer sizes

- irqbalance configuration for NIC interrupt distribution

- Custom eBPF filters to prioritize Solana traffic

- Optimized Turbine fanout settings

These changes reduce base network jitter and keep p99 latency flat during traffic spikes.

Monitoring and failover

Track p50, p95, and p99 latency separately per RPC method. Average latency hides tail behavior that kills trading bots. Set slot lag alerts—if your node falls more than 1–2 slots behind the cluster, your state data is stale, and your agent is acting on wrong prices.
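A slot lag alert needs only two inputs: your node's slot and an estimate of the cluster tip. The sketch below assumes you collect `getSlot` responses from a few reference endpoints and take the highest as the tip; the endpoint names and thresholds are illustrative and follow the 1–2 slot guidance above.

```python
def cluster_tip(reported_slots: dict) -> int:
    """Estimate the cluster tip as the highest slot reported by any reference endpoint."""
    if not reported_slots:
        raise ValueError("need at least one reference endpoint")
    return max(reported_slots.values())


def lag_status(node_slot: int, tip_slot: int,
               warn_above: int = 1, stale_above: int = 2) -> str:
    """Classify slot lag: 'ok', 'warn' above warn_above, 'stale' above stale_above."""
    lag = max(0, tip_slot - node_slot)
    if lag > stale_above:
        return "stale"
    if lag > warn_above:
        return "warn"
    return "ok"


# Hypothetical slots reported by three reference endpoints.
tip = cluster_tip({"ref-a": 1000, "ref-b": 1001, "ref-c": 999})
print(lag_status(node_slot=1000, tip_slot=tip))
print(lag_status(node_slot=998, tip_slot=tip))
```

When the status is "stale", the safest response is to pause order generation rather than trade on old prices.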

Automated failover must be sub-50ms with pre-warmed connections. Cold-start failover takes seconds. Seconds during a volatile event mean missed opportunities and failed transactions.
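The idea behind pre-warming is that every backup connection already exists before it is needed, so a switch is a lookup rather than a handshake. The sketch below shows only that selection logic. The `connect` callable and the endpoint names are hypothetical, and the code makes no claim about meeting a specific latency target.

```python
class FailoverPool:
    """Keeps a connection open to every endpoint so switching needs no new handshake."""

    def __init__(self, endpoint_names, connect):
        # `connect` is a hypothetical callable: endpoint name -> open connection.
        self._order = list(endpoint_names)  # priority order, primary first
        self._connections = {name: connect(name) for name in self._order}
        self._down = set()

    def mark_down(self, name: str) -> None:
        self._down.add(name)

    def mark_up(self, name: str) -> None:
        self._down.discard(name)

    def active(self):
        """Return (name, connection) for the highest-priority healthy endpoint."""
        for name in self._order:
            if name not in self._down:
                return name, self._connections[name]
        raise RuntimeError("all endpoints are marked down")


# Stand-in connect function for demonstration.
pool = FailoverPool(["primary-fra", "backup-ams"], connect=lambda name: f"conn:{name}")
print(pool.active())
pool.mark_down("primary-fra")
print(pool.active())
```

In production, health checks such as the slot lag alert would drive `mark_down` and `mark_up`, and each connection would need keepalives so it stays warm.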

Real-world cases from RPC Fast practice

Copy-trading bot, Frankfurt co-location

A Rust-based copy-trading agent co-located with an RPC Fast Frankfurt node achieved 15ms best-case transaction landing and consistently secured same-slot or +1 slot execution against KOL wallets. During 100,000-call stress tests, the node held sub-1ms response times with no rate limiting.

MEV arbitrage, ShredStream vs. Yellowstone

Across 2,078,707 matched transactions in the Dysnix benchmark, ShredStream arrived first in 64.5% of cases with a 32.8ms average advantage and a maximum advantage of 1,323ms. For sub-slot arbitrage strategies, ShredStream is the correct data source—not Yellowstone alone.

Yield optimization agent, SaaS tier

A DeFi yield agent querying getAccountInfo and simulateTransaction across Kamino and Drift ran without issue on a Light-tier dedicated node. No getProgramAccounts required. Infrastructure cost stayed below the threshold where a dedicated node makes economic sense versus SaaS.

The pattern: match your node tier to your actual RPC call pattern. Overpaying for Pro when Light covers your methods wastes budget. Underpaying and hitting method restrictions at scale breaks your agent.

Provider landscape at a glance

Build the stack, then scale the strategy

The agent layer on Solana is moving faster than most teams expect. The frameworks are stable. The strategies are proven. What separates the bots that print from the bots that bleed is the infrastructure underneath.

Start with the data layer. Get off polling. Set up Yellowstone gRPC with server-side filters. Add ShredStream if your strategy requires sub-slot data. Move to a dedicated node before you scale capital. Co-locate in Frankfurt or US East. Tune the kernel. Monitor p99, not average.

Every layer compounds. A 30ms improvement in data freshness, plus a 20ms improvement in submission latency, plus a 10ms improvement from co-location, adds up to a bot that lands trades its competitors miss.

Not sure which node tier fits your agent's call pattern?

To help you decide, the RPC Fast team offers a free 1-hour infrastructure briefing—your architecture, your strategy, and specific recommendations.
