On January 20, 2025, bots paid 8,584 SOL in a single hour to secure blockspace on Solana

That's over $1.5 million—in sixty minutes, during the TrumpCoin launch. Not an anomaly. A perfectly rational bid for the right to be first in the block, because being second meant zero profit.

The broader 2025 picture: MEV revenue on Solana hit $720 million for the year, overtaking priority fees as the largest component of the network's real economic value. The Jito Block Engine generated $4.7 million in fees in Q3 2025 alone. MEV is no longer a niche—it's the economic engine powering validator incentives on Solana.

What most coverage misses: the searchers who captured the majority of that value weren't running the most sophisticated strategies. They were running the best infrastructure. This article breaks it down layer by layer—hardware, geography, streaming plugins, submission paths, relay diversity, and the decisions that separate a profitable MEV setup from an expensive way to generate failed bundles.

Why infrastructure determines MEV outcomes on Solana

Solana produces a new block every 400 milliseconds. That's one slot. On Ethereum, a 12-second block time gives searchers a meaningful window to observe pending transactions, calculate profitability, and submit bids. On Solana, that window is measured in single-digit milliseconds—and the slot leader changes every four slots, rotating across validators globally.

The consequence: on Solana, MEV is not won by smarter code alone. It's won by being closer to the current leader, receiving state updates faster, and submitting bundles before anyone else. This is why MEV on Solana has been described as an infrastructure arms race—and why every serious searcher eventually ends up colocating, running dedicated hardware, and optimizing every hop in the delivery chain.

| Factor | Ethereum MEV | Solana MEV |
|---|---|---|
| Block time | 12 seconds | 400 milliseconds |
| Mempool | Public (visible to all) | No global mempool; private relays only |
| Competition axis | Fee bidding | Latency + fee (infrastructure wins) |
| Bundle system | MEV-Boost / Flashbots | Jito block engine |
| Validator client MEV adoption | ~90% use MEV-Boost | ~92% stake-weighted use Jito client |
| MEV revenue (Q2 2025) | ~$129M | ~$271M (40% of all chains) |

Layer 1: hardware—bare-metal is not optional for serious MEV

The starting point for every competitive MEV setup is dedicated bare-metal hardware. This is not about cost—it's about physics. When you run on a shared cloud VM, you compete for CPU cycles and memory bandwidth with other tenants on the same physical machine. Under Solana's constant throughput, those resource conflicts show up as latency spikes at exactly the wrong moment.

What the hardware stack looks like in practice

The Solana validator spec calls for a minimum of 12 cores at 2.8 GHz, but competitive MEV operators consistently deploy far beyond the minimum. Community benchmarks show that AMD EPYC processors—specifically the EPYC 9355 and EPYC 7443P series—are the dominant choice for MEV-optimized setups, owing to their high single-thread performance and large L3 cache.

- CPU: AMD EPYC 9355 or 7443P—high clock speed, SHA and AVX2 extensions required, single-thread performance over raw core count

- RAM: 512 GB minimum for validator-grade setups; MEV RPC nodes typically run 256–512 GB

- Storage: NVMe SSD, ideally enterprise-grade (Samsung PM9A3 or equivalent)—Solana generates ~1 TB of new data per day, and slow I/O translates directly to slot lag

- Network: 1–10 Gbps symmetric connection with low jitter—packet loss during high-traffic periods causes transaction retries and missed slots

The difference between a dedicated bare-metal EPYC server and a shared cloud instance isn't measured in average latency; it's measured in p99 latency. During periods of normal traffic, both may look similar. During a memecoin launch, a liquidation cascade, or a high-volume arbitrage window, the cloud VM starts missing slots while the bare-metal server holds its response time steady at around 40ms.
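To see why an average hides the problem, here is a minimal Python sketch that summarizes a latency sample with nearest-rank percentiles. The sample values (97 requests at 40ms and 3 stalled at 400ms) are invented for illustration, and `latency_summary` is a hypothetical helper, not part of any Solana tooling.

```python
def latency_summary(samples_ms):
    """Summarize latency samples (milliseconds) as mean, p50, p95 and p99."""
    ordered = sorted(samples_ms)
    n = len(ordered)

    def percentile(p):
        # Nearest-rank method: the smallest sample with at least p% of
        # all samples at or below it. -(-a // b) is integer ceil(a / b).
        rank = max(1, -(-n * p // 100))
        return ordered[rank - 1]

    return {
        "mean": sum(ordered) / n,
        "p50": percentile(50),
        "p95": percentile(95),
        "p99": percentile(99),
    }


# Illustrative data only: 97 fast responses and 3 noisy-neighbor stalls.
samples = [40] * 97 + [400] * 3
summary = latency_summary(samples)
print(summary)
```

With this made-up sample the mean stays close to the fast path while p99 lands on the stalled requests, which is the tail behavior that decides whether a bundle makes its slot.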

Layer 2: geography—the speed of light is a real constraint

Solana's 400ms slot time creates a physical boundary that no amount of clever code can overcome: the speed of light. A 150ms round-trip between your server and the nearest validator means your transaction arrives well into the current slot—or misses it entirely and waits for the next. Under congestion, that delay compounds.
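A rough back-of-the-envelope sketch of that physical floor, in Python. It assumes light travels through fiber at about two thirds of its vacuum speed and ignores routing detours, switching, and queuing, so real round-trips will be higher. The 6,500 km figure is an approximate great-circle distance between Frankfurt and Ashburn, used only as an example.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # vacuum speed of light
FIBER_FACTOR = 2 / 3                # assumption: typical slowdown in optical fiber
SLOT_MS = 400                       # Solana slot time from the text


def min_rtt_ms(distance_km):
    """Lower bound on round-trip time over fiber for a given one-way distance."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_S * FIBER_FACTOR)
    return 2 * one_way_s * 1000


def slot_fraction(rtt_ms):
    """Share of one 400ms slot consumed by a single round-trip."""
    return rtt_ms / SLOT_MS


# Approximate Frankfurt <-> Ashburn distance, for illustration only.
rtt = min_rtt_ms(6_500)
print(f"minimum RTT: {rtt:.1f} ms, {slot_fraction(rtt):.0%} of a slot")
```

Even this best case spends a noticeable share of the slot on one round-trip, before any code has run.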

Where Solana validators actually are

The Solana validator set is globally distributed, but not evenly. The highest concentration of high-stake validators runs in US East Coast data centers (Ashburn, VA; New York Metro), with secondary clusters in Western Europe (Frankfurt, Amsterdam) and East Asia (Tokyo, Seoul). The current slot leader changes every four slots—roughly every 1.6 seconds—which means your optimal geographic position shifts continuously.
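The rotation arithmetic can be sketched in a few lines of Python. This assumes leader windows are aligned to multiples of four slots and that every slot takes the full 400ms target; real slot times drift, so treat the millisecond value as an upper-bound estimate. `leader_window` is a hypothetical helper.

```python
SLOT_MS = 400          # target slot time from the text
SLOTS_PER_LEADER = 4   # consecutive slots per leader, from the text


def leader_window(slot):
    """Describe the four-slot leader window that contains `slot`."""
    first_slot = slot - slot % SLOTS_PER_LEADER
    last_slot = first_slot + SLOTS_PER_LEADER - 1
    slots_left = last_slot - slot + 1  # counts the current slot as well
    return {
        "first_slot": first_slot,
        "last_slot": last_slot,
        "slots_left": slots_left,
        # Upper bound: assumes the current slot has only just started.
        "max_ms_left": slots_left * SLOT_MS,
    }


print(leader_window(1002))
print("full window:", SLOTS_PER_LEADER * SLOT_MS, "ms")
```

A bot that tracks this window knows how long the current leader remains the target before routing has to change.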

Colocation strategy for MEV

The most effective setup—used by top-tier searchers—is to colocate the trading bot and the RPC node in the same physical data center as a high-stake validator, ideally on the same LAN segment. This eliminates geographic hops between the three critical components: your state reader, your bundle submitter, and the validator that includes your transaction.

- Same-DC colocation eliminates external network hops between bot and RPC—typically 5–15ms saved per request

- LAN-local RPC access removes internet routing entirely for state reads—latency drops from 20–100ms to sub-1ms

- Proximity to the current slot leader reduces submission-to-inclusion time—the closer you are, the higher your priority

- Multi-region setups use multiple colocated nodes and switch to the one nearest the current leader dynamically
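The multi-region switch in the last bullet could look something like the Python sketch below. It assumes you already measure round-trip time from each of your regions to each validator; the region names, validator identities, and RTT numbers are all placeholders, and `pick_region` is a hypothetical helper.

```python
def pick_region(leader, rtt_ms_by_region):
    """Return the region with the lowest measured RTT to `leader`.

    rtt_ms_by_region maps region name -> {validator identity: RTT in ms}.
    Returns None when no region has a measurement for this leader.
    """
    candidates = {
        region: rtts[leader]
        for region, rtts in rtt_ms_by_region.items()
        if leader in rtts
    }
    if not candidates:
        return None
    return min(candidates, key=candidates.get)


# Placeholder measurements, not real data.
measured = {
    "ashburn":   {"validator_A": 1.2,  "validator_B": 82.0},
    "frankfurt": {"validator_A": 88.0, "validator_B": 0.9},
    "tokyo":     {"validator_A": 150.0},
}

print(pick_region("validator_A", measured))
print(pick_region("validator_B", measured))
```

In practice the lookup would run ahead of each leader rotation so the connection to the chosen region is already warm when the window opens.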

Searchers positioned near centralized exchange (CEX) infrastructure also gain an additional edge: they can monitor off-chain price movements on CEX order books and detect arbitrage gaps between CEX prices and on-chain DEX pools before they fully close. This CEX-DEX arbitrage is one of the most consistently profitable MEV strategies on Solana.

Layer 3: data streaming—why WebSocket alone isn't enough for MEV

Most developers start with WebSocket subscriptions for real-time Solana data. It works. But for MEV, it's a significant bottleneck. The standard WebSocket API delivers JSON-encoded text messages over a connection upgraded from HTTP/1.1: readable, easy to parse, and slow relative to what's available.

Geyser plugins: what they are and why they matter

The Geyser plugin system is built directly into the Solana validator. It allows plugins to receive account updates, transaction data, slot changes, and block notifications directly from validator memory—before that data has been serialized, packaged, and shipped through the standard RPC layer. The result is a much shorter path between what happens on-chain and what your bot sees.

Yellowstone gRPC: the standard for MEV data feeds

Yellowstone gRPC (also called Dragon's Mouth) is the open-source gRPC implementation of the Geyser plugin, developed by Triton One and widely adopted across the ecosystem. It uses Protocol Buffers (protobuf) for serialization and HTTP/2 for transport, both significantly more efficient than JSON text frames sent over a WebSocket connection.

| Property | WebSocket (standard) | Yellowstone gRPC |
|---|---|---|
| Protocol | JSON over WebSocket (HTTP/1.1 upgrade) | Protobuf over HTTP/2 |
| Typical latency | 50–300ms | Sub-50ms from validator memory |
| Data source | RPC layer (post-processing) | Directly from validator memory |
| Filtering | Limited, client-side | Server-side: account, program, tx filters |
| Missed updates | Common on reconnect | from_slot parameter for replay/recovery |
| Best for | Dashboards, light monitoring | Trading bots, MEV, liquidation triggers |

Yellowstone delivers data from inside the validator process: the Geyser interface hands account, transaction, and slot updates to the plugin as the validator processes them, before the RPC layer serializes anything. This means your bot can react to state changes in under 50ms in well-configured setups, compared to the 100–300ms that standard WebSocket subscriptions typically achieve.

Practical filtering is another major advantage. Instead of receiving every transaction and filtering client-side, Yellowstone lets you subscribe to specific accounts, programs, or transaction patterns at the server level. For a liquidation bot monitoring undercollateralized positions on a lending protocol, this means your feed contains only what you need—no wasted bandwidth, no CPU overhead parsing irrelevant data.

- Subscribe to accounts—track balance changes, data modifications, ownership events on specific addresses

- Subscribe to transactions—filter by program ID, instruction type, or involved accounts

- Subscribe to slots—track slot progress and leader changes for submission timing

- from_slot replay—recover missed events after reconnection without restarting from scratch
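The replay bullet can be sketched as a small cursor that remembers the highest slot seen and adds `from_slot` to the next subscription request after a disconnect. This is plain Python with no gRPC client involved. The request is an ordinary dict whose keys are loosely modeled on a Yellowstone subscription and have not been checked against a specific release. `ReplayCursor` and the program ID placeholder are hypothetical.

```python
class ReplayCursor:
    """Tracks the highest slot observed so a reconnect can resume from it."""

    def __init__(self):
        self.last_slot = None

    def observe(self, slot):
        # Updates can arrive slightly out of order, so keep the maximum.
        if self.last_slot is None or slot > self.last_slot:
            self.last_slot = slot

    def resume_request(self, base_request):
        """Copy the base request, adding from_slot once a slot has been seen."""
        request = dict(base_request)
        if self.last_slot is not None:
            # Resume at the last seen slot, not the one after it, because
            # that slot may have been only partially delivered. The consumer
            # must therefore tolerate duplicate updates.
            request["from_slot"] = self.last_slot
        return request


# Hypothetical filter: accounts owned by one lending program.
base = {"accounts": {"lending": {"owner": ["<LENDING_PROGRAM_ID>"]}}}

cursor = ReplayCursor()
for slot in (100, 102, 101):
    cursor.observe(slot)

print(cursor.resume_request(base))
```

Replaying from the last seen slot trades a few duplicate updates for the guarantee that nothing in a partially delivered slot is skipped.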

Layer 4: transaction submission—how bundles actually get to validators

Once your bot detects an opportunity and constructs a transaction, the submission path determines whether it lands in the current slot or misses it entirely. There are three primary paths searchers use, and the best setups combine all three.

Jito block engine and ShredStream

Jito's block engine is the default MEV submission infrastructure on Solana. With ~92% of validators by stake weight running the Jito-Solana client, submitting through Jito gives your bundle the highest probability of reaching the current leader. The bundle system allows up to five atomically executed transactions with an attached SOL tip—the validator includes the highest-paying bundles at the top of the block.
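As a rough illustration of the five-transaction limit, the Python sketch below builds a JSON-RPC request body for a bundle without sending it. The method name and the encoding option are modeled on Jito's `sendBundle` call and should be verified against the current Jito documentation. The transaction strings are placeholders, and `build_send_bundle_payload` is a hypothetical helper.

```python
import json

MAX_BUNDLE_TXS = 5  # bundle size limit described in the text


def build_send_bundle_payload(signed_txs_b64, request_id=1):
    """Build a JSON-RPC body for a bundle of already-signed transactions.

    Assumption: one transaction in the bundle already carries the SOL tip
    as a transfer instruction. This helper does not add a tip itself.
    """
    if not 1 <= len(signed_txs_b64) <= MAX_BUNDLE_TXS:
        raise ValueError(
            f"a bundle holds 1 to {MAX_BUNDLE_TXS} transactions, "
            f"got {len(signed_txs_b64)}"
        )
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sendBundle",  # assumed method name, verify before use
        "params": [list(signed_txs_b64), {"encoding": "base64"}],
    }


# Placeholder strings stand in for real base64-encoded signed transactions.
payload = build_send_bundle_payload(["<swap_tx>", "<tip_tx>"])
print(json.dumps(payload, indent=2))
```

Validating the bundle size locally avoids spending a round-trip on a request the block engine would reject anyway.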

Jito ShredStream goes a step further. Instead of waiting for fully formed blocks to propagate, ShredStream delivers raw transaction shreds directly to subscribing nodes as the slot leader produces them. This gives your RPC node—and by extension your bot—access to state data 50–200ms before standard gossip propagation completes. For sub-slot arbitrage strategies, that window is everything.

bloXroute and relay diversity

bloXroute operates a global Blockchain Distribution Network (BDN) that routes transactions through purpose-built relay nodes rather than the standard Solana gossip network. Its primary advantage over Jito is geographic diversity—the BDN is designed to cover edge cases where the current slot leader is in a region where Jito's direct validator ties are weaker.

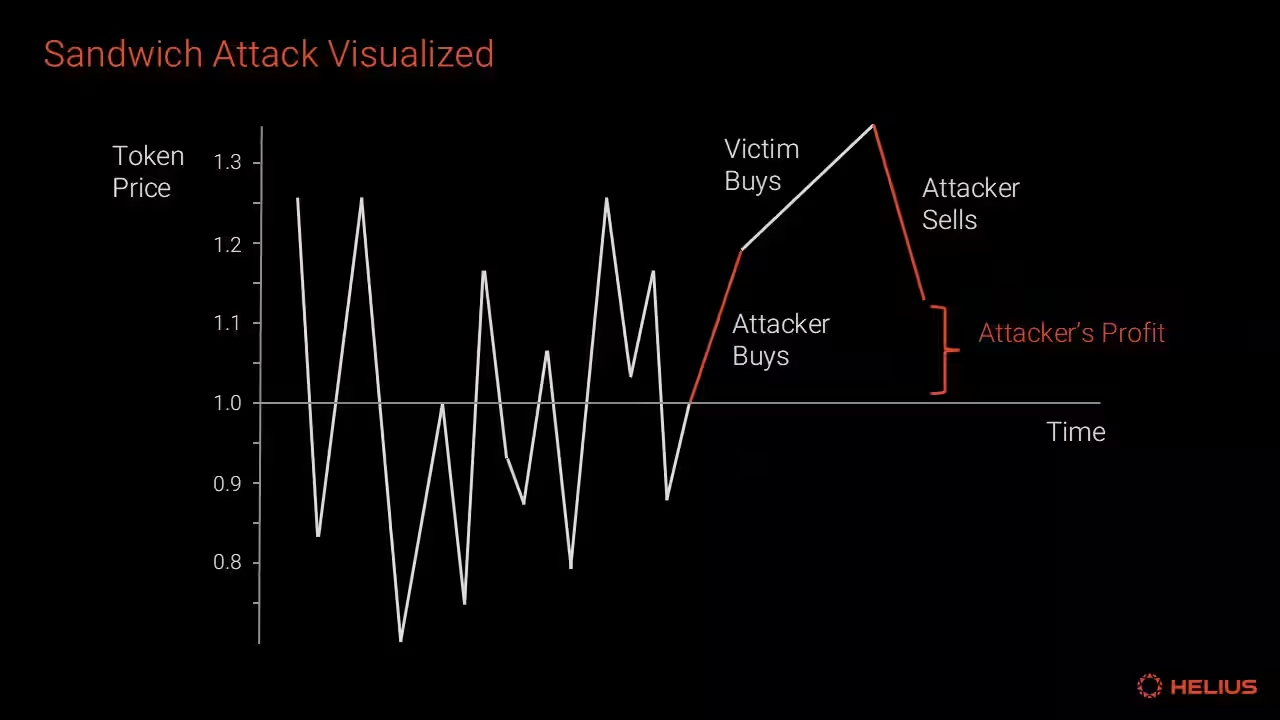

In October 2025, bloXroute introduced a leader-aware submission system that scores current and upcoming slot leaders in real time, identifies validators with elevated risk of malicious ordering (sandwich attack correlation), and dynamically adjusts submission paths—delaying or skipping high-risk leaders while accelerating submission through trusted ones.

The practical approach for serious searchers: submit to both Jito and bloXroute in parallel. The marginal cost is negligible; the improvement in inclusion rate is not.
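One way to structure parallel submission is sketched below with Python's `asyncio`. The relay senders here are stand-ins that only simulate a fast and a stalled relay; real senders would wrap each provider's own client, which is outside the scope of this sketch. `submit_to_all` and the 200ms default timeout are assumptions, not values from either provider.

```python
import asyncio


async def submit_to_all(bundle, senders, timeout_s=0.2):
    """Send one bundle through every relay concurrently.

    senders maps relay name -> async function taking the bundle.
    Returns relay name -> result, or the exception that relay raised.
    """
    async def guarded(name, send):
        try:
            return name, await asyncio.wait_for(send(bundle), timeout_s)
        except Exception as exc:  # includes timeouts; one bad relay must not block the rest
            return name, exc

    pairs = await asyncio.gather(
        *(guarded(name, send) for name, send in senders.items())
    )
    return dict(pairs)


# Stand-in senders for illustration only.
async def fake_fast_relay(bundle):
    return "accepted"


async def fake_stalled_relay(bundle):
    await asyncio.sleep(1.0)
    return "accepted"


if __name__ == "__main__":
    outcome = asyncio.run(
        submit_to_all(
            ["<signed_tx>"],
            {"relay_a": fake_fast_relay, "relay_b": fake_stalled_relay},
            timeout_s=0.05,
        )
    )
    print(outcome)
```

Because every path carries the same signed transactions, a transaction that lands through one path cannot execute a second time through another, so the cost of the extra paths is mostly bandwidth.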

Stake-weighted QoS (SWQoS)

Solana's Stake-Weighted Quality of Service mechanism gives transactions that arrive from or through highly-staked nodes priority treatment during congestion. In plain terms: a transaction forwarded by a validator with large stake is less likely to be dropped when the network is full. RPC providers that operate their own staked validators—or that have formal relationships with staked nodes—can forward your transactions with this priority advantage built in.

| Submission path | Best for | Key advantage |
|---|---|---|
| Jito block engine | All MEV strategies | Guaranteed order execution, ~92% validator coverage |
| Jito ShredStream | Sub-slot arbitrage, sniping | Early shred access: 50–150ms before gossip |
| bloXroute BDN | Global coverage, leader diversity | Dynamic leader scoring, multi-path routing |
| SWQoS (staked forwarding) | High-congestion periods | Priority during network saturation |
| Parallel multi-relay | Production MEV bots | Maximum inclusion rate across all paths |

Layer 5: monitoring, failover, and operational reliability

A competitive MEV setup that goes down for five minutes during a volatile market event loses more than a month of infrastructure costs. Operational reliability is not a secondary concern—it's a direct determinant of profitability.

- Latency monitoring per method—track p50, p95, and p99 separately; average latency hides tail behavior that kills MEV bots

- Slot lag alerts—if your RPC node falls more than 1–2 slots behind the cluster, your state data is stale and your opportunities are priced wrong

- Automated failover—sub-50ms switchover to a backup endpoint when the primary node degrades; this requires pre-warmed connections, not cold start

- Bundle success rate tracking—monitor acceptance rate per relay to detect when a submission path degrades

- Leader schedule awareness—your bot should know upcoming slot leaders and adjust submission routing before the slot changes, not after

- Jito tip optimization—arbitrage bots on Solana typically pay 50–60% of profits in validator tips; calibrating this dynamically based on competition level significantly impacts net margins
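The tip calibration in the last bullet can be sketched as a simple interpolation. This Python example moves the tip between 50% and 60% of expected profit as a competition score rises from 0 to 1. How that score is derived (for example from recent bundle acceptance rates) is left open, and the linear rule itself is an assumption for illustration, not a recommended strategy. Amounts are in lamports and use integer basis points to avoid rounding drift.

```python
MIN_TIP_BPS = 5_000   # 50% of profit, lower end of the range in the text
MAX_TIP_BPS = 6_000   # 60% of profit, upper end of the range in the text


def size_tip(expected_profit_lamports, competition):
    """Return (tip, net_profit) in lamports for a competition score in [0, 1]."""
    competition = min(1.0, max(0.0, competition))  # clamp out-of-range scores
    tip_bps = MIN_TIP_BPS + round((MAX_TIP_BPS - MIN_TIP_BPS) * competition)
    tip = expected_profit_lamports * tip_bps // 10_000
    return tip, expected_profit_lamports - tip


for score in (0.0, 0.5, 1.0):
    tip, net = size_tip(1_000_000, score)
    print(f"competition={score:.1f} tip={tip} net={net}")
```

Tracking net profit alongside the tip makes it easier to see when higher bids stop paying for themselves.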

Infrastructure stack summary

| Layer | Component | What it does for MEV |
|---|---|---|
| Hardware | Bare-metal EPYC servers | Eliminates noisy-neighbor latency spikes, consistent p99 |
| Geography | Colocation near high-stake validators | Reduces submission-to-inclusion time by 5–10× |
| Data streaming | Yellowstone gRPC / Geyser | Sub-50ms state updates directly from validator memory |
| Shred access | Jito ShredStream | 50–150ms ahead of gossip propagation |
| Bundle submission | Jito block engine | Guaranteed order execution, ~92% validator coverage |
| Relay diversity | bloXroute BDN | Leader-aware routing, global coverage for edge cases |
| Priority forwarding | SWQoS via staked nodes | Transaction priority during network congestion |
| Monitoring | p99 latency + slot lag alerts | Catch degradation before it costs you a missed slot |

Building this yourself vs. using managed infrastructure

Every layer described above can be assembled independently. You can rent colocation space, provision bare-metal EPYC servers, configure Yellowstone gRPC, set up Jito ShredStream, run parallel relay submission, and build your own monitoring stack. Teams do this. It takes months to tune correctly, requires 24/7 operational attention, and the costs compound quickly.

The alternative—and the reason managed MEV infrastructure exists—is that the infrastructure itself is a commodity. The alpha is in your strategy. Every hour your team spends debugging slot lag, configuring relay failover, or firefighting a missed ShredStream connection is an hour not spent on the logic that actually generates profit.

At RPC Fast (built by Dysnix), we run the full MEV stack for trading teams on Solana—bare-metal EPYC infrastructure, Jito and bloXroute integration, Yellowstone gRPC feeds, SWQoS-enabled submission, and 24/7 monitoring with sub-50ms automated failover. We've tuned over 100 bots in production and our clients consistently report 40% latency reductions from their previous setup.

If you're serious about MEV on Solana, talk to us before you spend three months building infra.
