Congestion on Solana does not break the fee market. It breaks delivery. When the network experiences unprecedented volume, the bottleneck shifts from on-chain execution to the networking layer. If your packets never reach the leader’s Transaction Processing Unit (TPU), your priority fee—no matter how high—is irrelevant.

For CTOs and lead engineers in HFT and DeFi, landing transactions is a game of probability. To win, you must move beyond standard RPC calls and master the infrastructure-level levers that govern how transactions are admitted, scheduled, and executed.

The anatomy of a dropped transaction

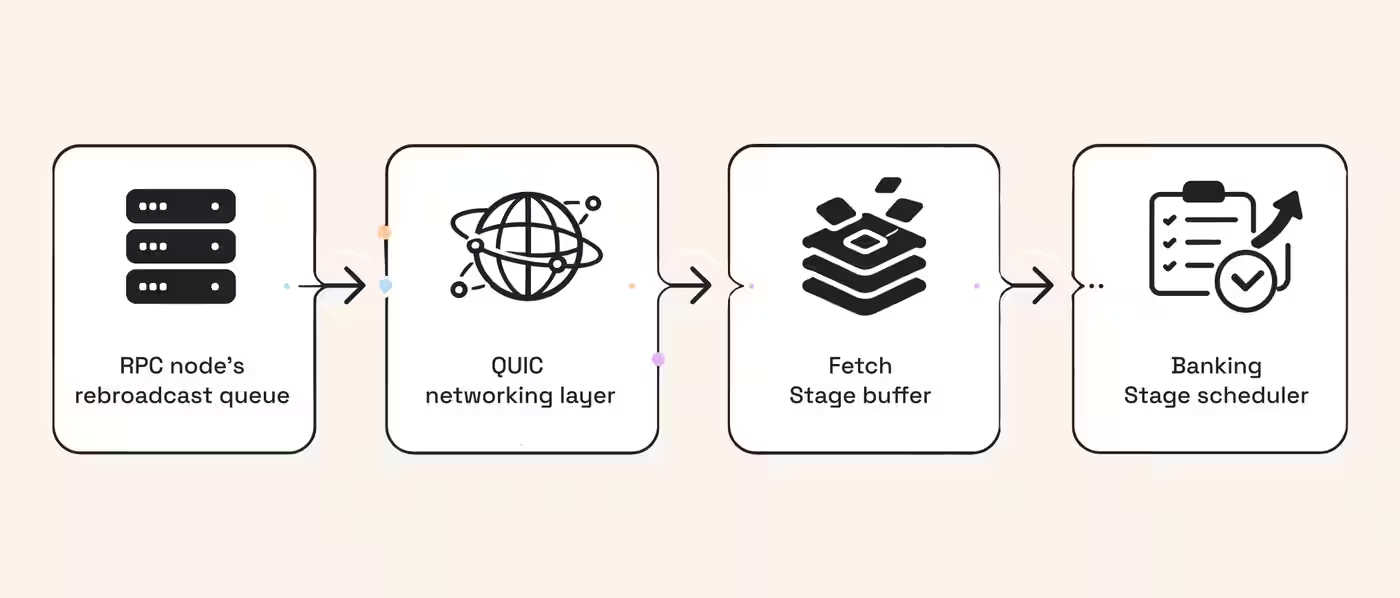

The drop problem runs deeper than any single layer. A transaction travels through at least four distinct failure surfaces before it lands: the RPC node's rebroadcast queue, the QUIC networking layer between the RPC and the leader's TPU, the TPU's own Fetch Stage buffer, and finally the Banking Stage scheduler.

At the RPC layer, if the outstanding rebroadcast queue exceeds 10,000 transactions, newly submitted ones are silently dropped before they ever touch the leader. At the QUIC layer, validators rate-limit connections by stake weight, so unstaked RPC traffic is the first to be shed under load. Even if your packet reaches the Fetch Stage, the TPU processes transactions in five sequential phases (Fetch, SigVerify, Banking, PoH recording, Broadcast), and any bottleneck in that pipeline causes backpressure that results in drops upstream.

The Solana documentation notes that the RPC node's generic rebroadcast logic is poorly suited to time-sensitive applications, so HFT and DeFi teams need to own their retry loop.

The sections below take these failure surfaces one at a time to show where infrastructure decides whether a transaction lands.

Network drops

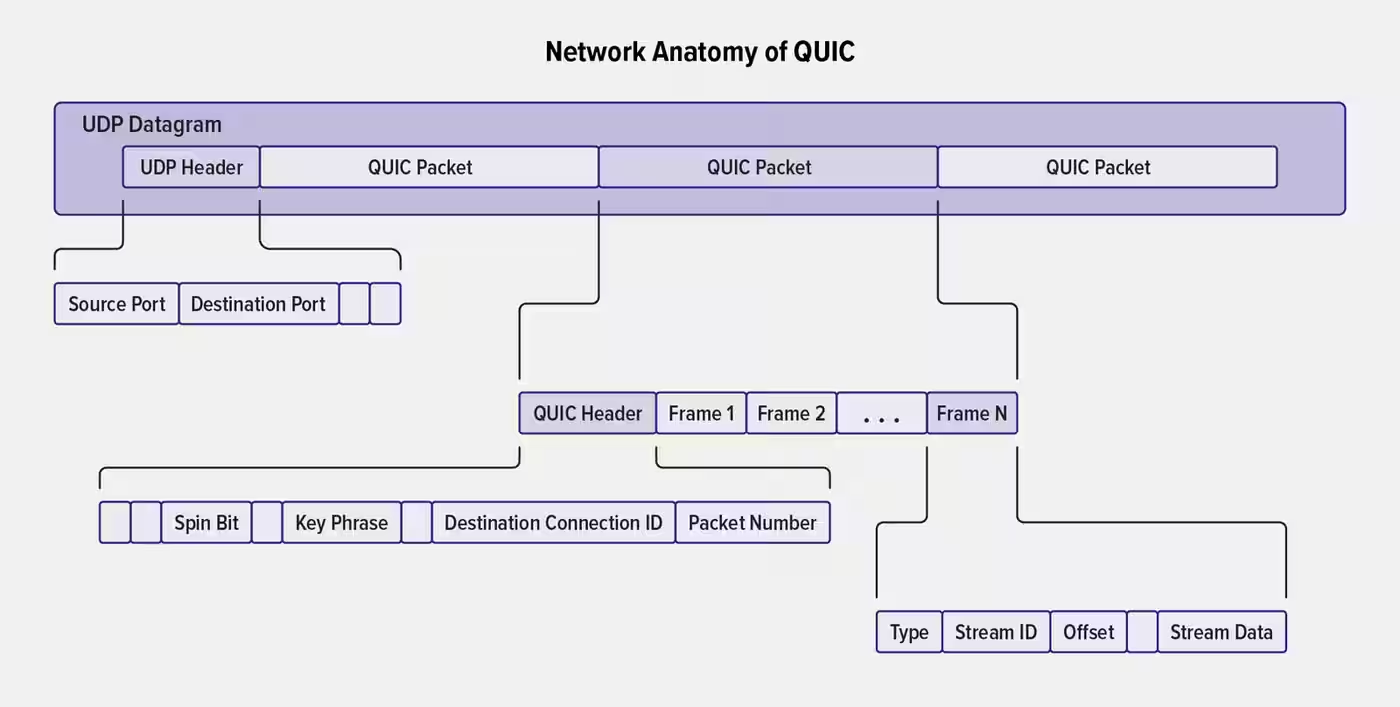

Solana uses QUIC for its networking layer. QUIC adds the flow control and per-connection limits that raw UDP lacks, but validators still have finite bandwidth.

When a leader's TPU is overwhelmed, it can forward surplus packets to the next leader, but forwarding capacity is limited and each forward is a single hop between validators. Combined with the RPC-side cap of 10,000 queued transactions, this means new submissions can be dropped before the runtime ever sees them.

Stale blockhashes

A blockhash expires after 151 slots (roughly 60 seconds).

A “blockhash” is the last Proof of History hash for a “slot.” Since Solana uses PoH as a trusted clock, a transaction's recent blockhash serves as a timestamp.

If your RPC node lags behind the cluster head, you may sign a transaction with a blockhash the leader already considers too old. This is common in shared RPC clusters that trail the leader by 2–5 slots, leading to immediate rejection by the validator.
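A client can guard against signing with a stale blockhash by comparing the cluster's current block height with the `lastValidBlockHeight` that `getLatestBlockhash` returns alongside the blockhash. The sketch below is a minimal illustration, not a definitive implementation: the helper names and the 20-block safety margin are assumptions, and the HTTP transport is left out so the logic stays testable.

```python
import json


def latest_blockhash_request(commitment: str = "confirmed", request_id: int = 1) -> str:
    """Build the JSON-RPC body for getLatestBlockhash."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "getLatestBlockhash",
        "params": [{"commitment": commitment}],
    })


def is_expired(current_block_height: int, last_valid_block_height: int) -> bool:
    """A transaction can no longer land once the chain has passed lastValidBlockHeight."""
    return current_block_height > last_valid_block_height


def should_refresh(current_block_height: int,
                   last_valid_block_height: int,
                   safety_margin: int = 20) -> bool:
    """Refresh early: safety_margin is a hypothetical buffer for RPC lag and propagation."""
    remaining = last_valid_block_height - current_block_height
    return remaining <= safety_margin
```

Calling `should_refresh` with the result of `getBlockHeight` before each signing attempt lets the client re-fetch the blockhash before a lagging RPC node makes it unusable.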

Compute starvation

Block capacity on Solana is bounded by compute units (CUs). Solana’s current block limit is 60 million CUs, and core contributors are working toward 100 million CUs per block (SIMD-286) to reduce peak-time congestion.

When you hit hot writable accounts (popular pools, markets, liquidation vaults), you also run into scheduler and account-lock contention: your transaction stays valid, but it competes for limited per-slot compute and for access to the same locked state.

In practice, “compute starvation” shows up as non-inclusion across multiple slots until you refresh the blockhash and resubmit with a more competitive fee and routing strategy, because the leader keeps selecting transactions that fit and pay better under the current CU budget.

The transition: From network constraints to architectural control

Identifying these bottlenecks—network drops, stale hashes, and compute limits—is only half the battle for a lead engineer. The real challenge is moving from a "best-effort" submission model to a deterministic execution strategy. On Solana, you cannot simply "pay more" to bypass a full network buffer; you must architect your way into the leader’s TPU by leveraging the specific admission and execution layers the protocol provides.

To turn the tide during peak congestion, you need to pull two primary levers: Admission and Execution. The admission layer, governed by Stake-Weighted Quality of Service (SWQoS), determines if your packets even reach the validator. The execution layer, governed by compute units and priority fees, determines if they are scheduled once they arrive.

By mastering both, you move your transaction from the "unstaked" noise into the priority lane.

SWQoS: The admission layer

SWQoS is the most critical concept for reliable delivery. Because Solana is a Proof of Stake network, validators with more delegated SOL are allocated more bandwidth to the leader.

| Feature | SWQoS | Priority fees |
|---|---|---|
| Function | Controls access to the leader | Controls ordering inside the block |
| Impact | Reduces packet loss | Reduces execution delay |
| Requirement | Staked validator peering | Micro-lamports per CU |

Unstaked traffic is aggressively rate-limited during spikes. RPC nodes are technically unstaked, but they can gain "virtual stake" by peering with a staked validator. This creates a priority lane for your packets.

At RPC Fast, we partnered with bloXroute to bring SWQoS and ensure your transactions reach the leader, even when the public gossip network is saturated.

The execution layer: Compute units and fees

Once your transaction reaches the leader, it enters the Banking Stage. Here, the scheduler assigns transactions to threads. If two transactions target the same writable account, they cannot run in parallel. To optimize for inclusion:

- Scope your CU: Requesting the default 200k CU for a 50k CU transaction reserves block space you will not use and makes the transaction harder to schedule. Use the ComputeBudget instruction to set a tight limit.

- Implement priority fees: These are priced in micro-lamports per CU. While they don't guarantee same-slot execution, they increase your preference in the scheduler.

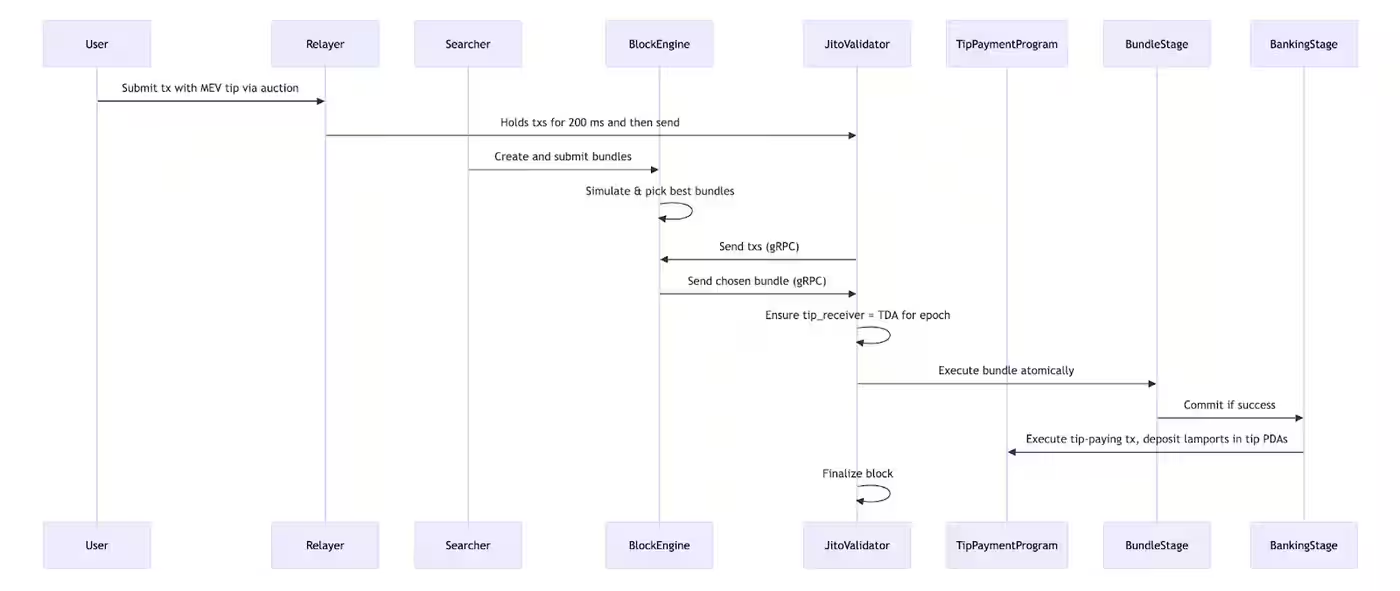

- Use Jito bundles: For atomic multi-step trades, Jito lets you bundle transactions so they execute all-or-nothing, and tip the validator so the bundle is prioritized for inclusion.
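The arithmetic behind the first two bullets can be sketched as follows. The prioritization fee is the requested CU limit multiplied by the price in micro-lamports per CU, rounded up to whole lamports, so an oversized limit costs more at the same price. The 10% headroom and the example numbers are illustrative assumptions; the simulated CU figure would typically come from simulating your own transaction.

```python
MICRO_LAMPORTS_PER_LAMPORT = 1_000_000
BASE_FEE_LAMPORTS_PER_SIGNATURE = 5_000


def tight_cu_limit(simulated_cu: int, headroom_percent: int = 10) -> int:
    """Pad the simulated CU usage by a small margin (assumed 10%), rounding up."""
    return (simulated_cu * (100 + headroom_percent) + 99) // 100


def priority_fee_lamports(cu_limit: int, micro_lamports_per_cu: int) -> int:
    """Prioritization fee = CU limit * CU price, rounded up to whole lamports."""
    total_micro = cu_limit * micro_lamports_per_cu
    return (total_micro + MICRO_LAMPORTS_PER_LAMPORT - 1) // MICRO_LAMPORTS_PER_LAMPORT


def total_fee_lamports(cu_limit: int, micro_lamports_per_cu: int, signatures: int = 1) -> int:
    """Base fee per signature plus the prioritization fee."""
    return (signatures * BASE_FEE_LAMPORTS_PER_SIGNATURE
            + priority_fee_lamports(cu_limit, micro_lamports_per_cu))


# Same price per CU, two different limits for a transaction that uses ~50k CU.
price = 20_000  # micro-lamports per CU, illustrative
default_fee = total_fee_lamports(200_000, price)
tight_fee = total_fee_lamports(tight_cu_limit(50_000), price)
```

With these inputs the default limit pays 9,000 lamports in total and the tight limit pays 6,100, and the tighter request also leaves more room for the scheduler to place it.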

Multi-path submission via Solana Trader API

Relying on a single submission path is a single point of failure. RPC Fast integrates the bloXroute Trader API directly into the standard sendTransaction method. This allows you to choose your execution strategy via query parameters.

By adding blxr_enable=1 to your request, you can access specialized submission modes:

- Fastest: Routes through staked nodes for maximum propagation speed.

- MEV Protect: Routes through Jito for front-running protection at the cost of slight latency.

- Balanced: A midpoint between speed and protection.

For SaaS users, a tip_amount (minimum 1,000,000 Lamports) is required to ensure high-quality traffic that validators won't rate-limit. Dedicated node users can embed tip instructions directly in the transaction body. See the Trader API documentation for implementation details.
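As a rough sketch of how a client might attach these parameters, the helper below appends `blxr_enable=1` and `tip_amount` to an endpoint URL and rejects tips below the stated minimum. It assumes `tip_amount` is passed as a query parameter like `blxr_enable`, and the endpoint URL is a placeholder; the parameter that selects a submission mode is not named here, so it is left out. Check the Trader API documentation for the actual request shape.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

MIN_TIP_LAMPORTS = 1_000_000  # minimum tip stated for SaaS users


def trader_api_url(base_url: str, tip_lamports: int) -> str:
    """Return base_url with blxr_enable=1 and tip_amount added to its query string.

    Assumption: tip_amount travels as a query parameter, like blxr_enable.
    """
    if tip_lamports < MIN_TIP_LAMPORTS:
        raise ValueError(f"tip must be at least {MIN_TIP_LAMPORTS} lamports")
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))  # keep existing params such as an API key
    query["blxr_enable"] = "1"
    query["tip_amount"] = str(tip_lamports)
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder endpoint, not a real RPC Fast URL.
example_url = trader_api_url("https://example.invalid/rpc?api_key=abc", 1_000_000)
```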

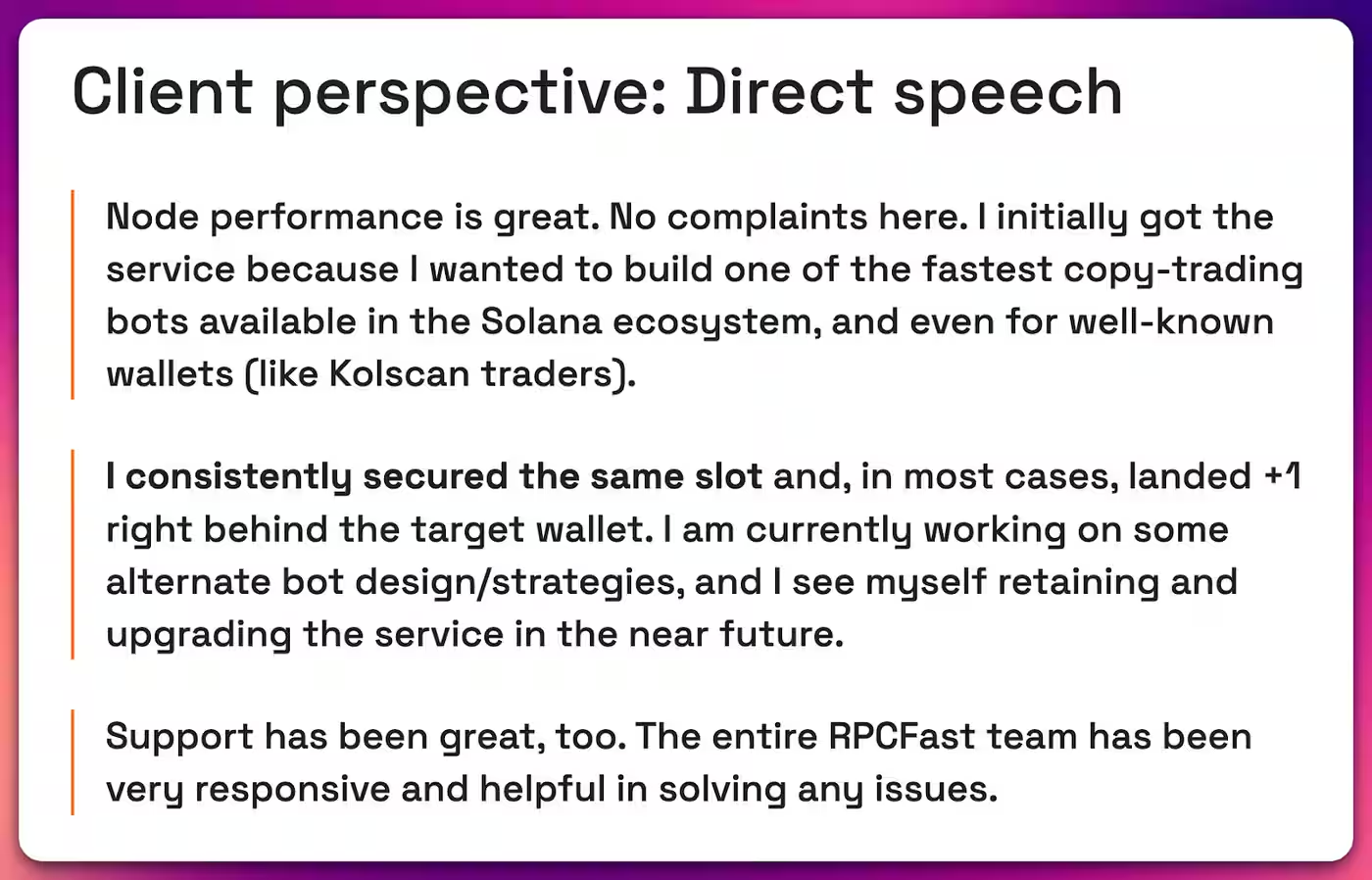

15ms landing for copy-trading? RPC Fast case study

We recently helped a client build a Rust-based copy-trading bot designed to outperform retail platforms. By co-locating their VPS with an RPC Fast dedicated node in Frankfurt and utilizing Yellowstone gRPC for shred-level parsing, they achieved:

- Lowest recorded landing: 15ms.

- Typical landing: 200–300ms (usually within 1 slot).

- Consistency: Landing in the same slot or +1 slot immediately following the target wallet.

The bot used a multi-sender strategy, rotating API keys across Helius, bloXroute, and QuickNode to bypass rate limits and ensure path diversity. Read the full HFT case study here.
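A multi-sender fan-out of this kind can be sketched in a few lines. This is not the client's code: the `Sender` callables are hypothetical wrappers around each provider's `sendTransaction` call, and each is assumed to enforce its own network timeout. Because every path receives the same signed bytes, every successful path reports the same signature.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, Dict, Tuple

# Hypothetical type: takes a base64-encoded signed transaction, returns its signature.
Sender = Callable[[str], str]


def broadcast_all(signed_tx_b64: str, senders: Dict[str, Sender]) -> Dict[str, Tuple[str, str]]:
    """Send the same signed transaction through every path in parallel.

    Returns {path_name: ("ok", signature)} or {path_name: ("error", message)}.
    """
    if not senders:
        return {}
    results: Dict[str, Tuple[str, str]] = {}
    with ThreadPoolExecutor(max_workers=len(senders)) as pool:
        futures = {pool.submit(send, signed_tx_b64): name for name, send in senders.items()}
        for future in as_completed(futures):
            name = futures[future]
            try:
                results[name] = ("ok", future.result())
            except Exception as exc:  # one failing path must not stop the others
                results[name] = ("error", str(exc))
    return results
```

Recording the per-path outcome, rather than only the first success, makes it possible to measure which provider is actually landing transactions during congestion.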

The strategic pivot: From best-effort to guaranteed execution

Most engineering teams treat the RPC as a black box. They call sendTransaction and wait for a signature. However, during congestion, the "Time to Inclusion" (TTI) spikes because shared RPCs lack the stake weight to get packets through the QUIC rate limits. If your competitor is using a dedicated node with staked identity, they aren't just faster; they are the only ones getting into the block.

Infrastructure-as-Alpha principle

To achieve the 15ms landing times seen in our HFT case studies, you must treat your infrastructure as part of your trading strategy. This involves three specific shifts in thinking:

- Path redundancy is mandatory

Relying on one RPC provider is a single point of failure. High-load systems must use a "Shotgun" approach—broadcasting the same transaction through multiple high-stake paths (e.g., RPC Fast + bloXroute + Jito) simultaneously. The first path to reach the leader wins; the rest are naturally deduplicated by the validator.

- The "tight CU" advantage

The Solana scheduler is a bin-packing algorithm. A transaction requesting 1.4M CU is harder to fit into a nearly full block than four transactions requesting 300k CU each. By programmatically optimizing your ComputeBudget instructions, you increase the number of "slots" where your transaction is a viable candidate for inclusion.

- Predictive rebroadcasting

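Instead of waiting for the 60-second blockhash expiry, sophisticated bots poll getSignatureStatuses every 200ms. If the transaction has not reached "processed" within 2 slots, they rebroadcast immediately, optionally with a slightly higher priority fee. Raising the fee means re-signing, which produces a new signature, so the bot must track every signature it has sent, since more than one version can land. This "aggressive retry" loop ensures you don't sit in a dead queue while the market moves against you.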
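The retry loop can be sketched with the transport injected as plain callables. This is a simplified variant under stated assumptions: it resends the same signed bytes rather than re-signing with a higher fee, so the signature never changes. The callables `send`, `get_status`, and `is_expired` are hypothetical wrappers around `sendTransaction`, `getSignatureStatuses`, and a block-height check, and the 0.8-second resend interval approximates 2 slots at 400ms.

```python
import time
from typing import Callable, Optional

LANDED = ("processed", "confirmed", "finalized")


def land_with_retries(
    send: Callable[[], str],                     # submits the signed tx, returns its signature
    get_status: Callable[[str], Optional[str]],  # confirmation status, or None if unknown
    is_expired: Callable[[], bool],              # True once the blockhash can no longer land
    poll_interval_s: float = 0.2,
    resend_interval_s: float = 0.8,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Optional[str]:
    """Poll for status and resend until the transaction lands or its blockhash expires."""
    signature = send()
    last_send = clock()
    while not is_expired():
        if get_status(signature) in LANDED:
            return signature
        if clock() - last_send >= resend_interval_s:
            send()  # same bytes, same signature: duplicates are dropped by the validator
            last_send = clock()
        sleep(poll_interval_s)
    return None  # expired: fetch a fresh blockhash, re-sign, and start over
```

Injecting `sleep` and `clock` keeps the loop deterministic under test; a production version would also cap total attempts and log each resend.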

The RPC Fast checklist for engineers

To maximize your landing rate, follow these battle-tested rules:

- Fetch blockhashes with "confirmed" commitment: This avoids using blockhashes from dropped forks.

- Set maxRetries to 0: Own your retry logic. Poll for transaction status and rebroadcast manually every 2 seconds until confirmed or expired.

- Skip preflight for HFT: If your signatures are verified and your logic is sound, skipPreflight: true saves critical milliseconds.

- Optimize CU budget: Smaller transactions are easier to fit into a nearly full block.

- Use staked connections: If your PnL depends on same-slot execution, unstaked RPCs are a liability.
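The JSON-RPC side of this checklist might look like the following sketch. It only builds request bodies, using the standard `sendTransaction` and `getSignatureStatuses` methods; the helper names are illustrative, and posting the bodies to your endpoint is left to whichever HTTP client you already use.

```python
import json
from typing import List


def send_transaction_request(signed_tx_b64: str, request_id: int = 1) -> str:
    """sendTransaction with RPC-side retries disabled and preflight skipped."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sendTransaction",
        "params": [
            signed_tx_b64,
            {
                "encoding": "base64",
                "skipPreflight": True,  # no simulation before forwarding
                "maxRetries": 0,        # the client owns the retry loop
            },
        ],
    })


def signature_statuses_request(signatures: List[str], request_id: int = 1) -> str:
    """getSignatureStatuses for the signatures the client is tracking."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "getSignatureStatuses",
        "params": [signatures, {"searchTransactionHistory": False}],
    })
```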

Power up your TX landing techniques

Landing transactions on Solana is no longer about just sending a JSON-RPC request. It requires a deep integration with the network's stake-weighted architecture and specialized routing APIs.

By combining SWQoS access with optimized compute usage and multi-path submission, you can turn network congestion from a threat into a competitive advantage.

If your current infra is dropping TXs or trailing the leader, audit your stack with us.

Request infra review
