Every second your app polls getAccountInfo, you burn rate limit budget, add round-trip latency, and miss events that happen between requests. On a chain processing over 5,000 TPS at peak with 400ms block times, polling is not a data strategy. It is a liability.

This article maps the full Solana streaming stack: what each layer does, where it breaks, and how to choose the right model for your workload.

At the end, we cover how RPC Fast packages this stack in its Solana RPC SaaS offering.

What "data streaming" means on Solana

Streaming replaces repeated HTTP requests with a persistent connection. Your node pushes state deltas to your application as they occur. You react instead of poll.

Streaming does not replace HTTP RPC. It complements it.

| Dimension | HTTP polling | Streaming |

|---|---|---|

| Update model | pull | push |

| Latency | higher | near real-time |

| RPC load | higher | lower |

| Best for | historical reads, lookups | live deltas, reactive state |

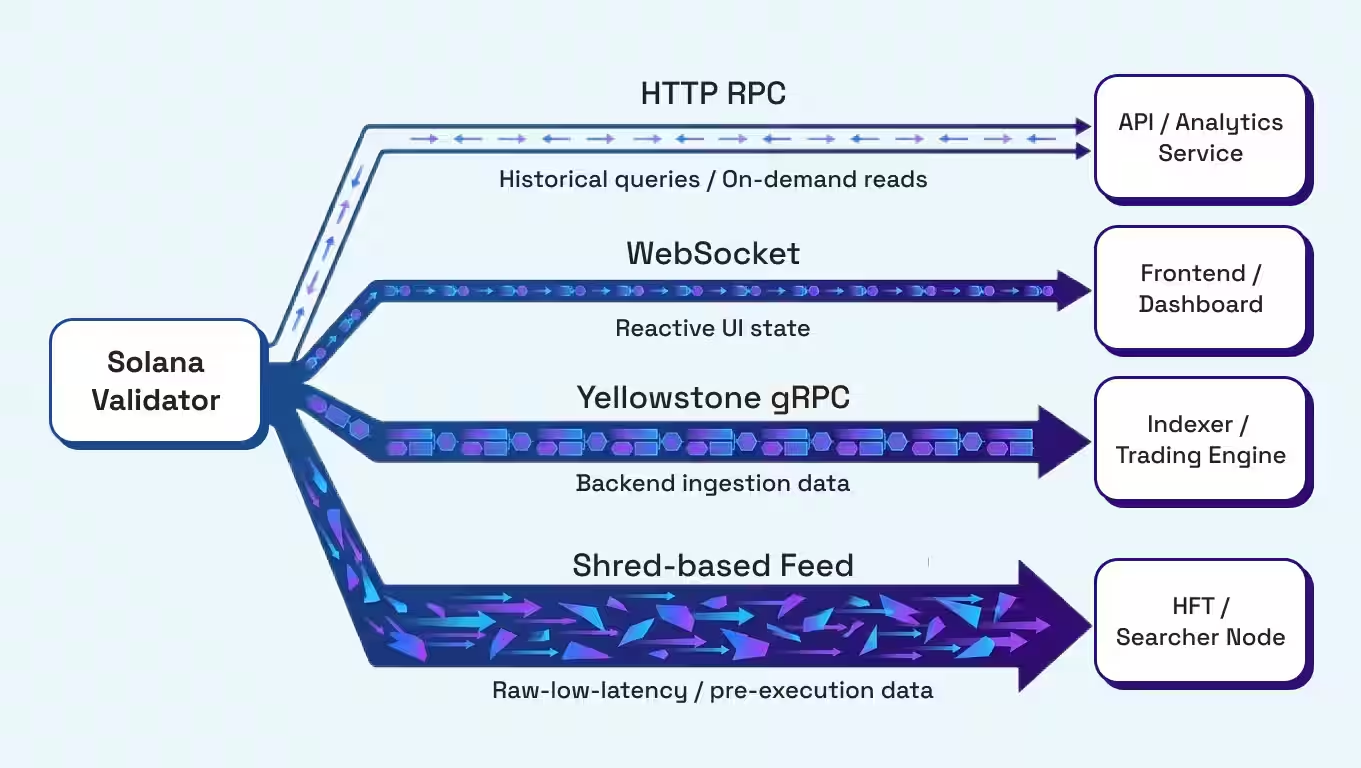

Most production systems run both: HTTP for historical queries and on-demand reads, and streaming for live state.

The two streaming paths Solana builders use

Solana gives you two native streaming mechanisms. Combining them or choosing solo variants is an architectural decision you make early, and it shapes your entire data pipeline.

Path 1: JSON-RPC WebSocket PubSub

The official Solana RPC interface includes a PubSub system over WebSockets. You open a persistent connection, send a subscription request, and receive push notifications when matching events occur. This setup is best for wallets, dashboards, moderate bots, and reactive UIs.

Supported subscription types:

accountSubscribe—account lamports or data changeprogramSubscribe—accounts owned by a program changelogsSubscribe—program log outputsignatureSubscribe—transaction confirmation statusslotSubscribe—new slot processedblockSubscribe—block data (if enabled)rootSubscribe—finalized root changes

WebSocket PubSub: Quick start

Here is the baseline implementation for account subscription. This is the entry point for most teams.

This works well for wallets, dashboards, and moderate bots. It starts to break under load.

Where WebSockets stop scaling

JSON overhead accumulates fast at high event volume. Subscription fan-out becomes expensive. Reconnect logic gets complex. And when your consumer falls behind, you face a backpressure problem with no built-in flow control.

Specific failure patterns teams hit in production:

- Slot race conditions when processing events out of order

- Dropped logs during burst periods

- Duplicate processing on reconnect without idempotent handlers

- Subscription explosion when monitoring hundreds of accounts

When you hit these, the answer is not tuning WebSocket parameters. It is moving to gRPC.

Path 2: gRPC via Geyser plugins

Solana validators load Geyser plugins that expose on-chain events at the validator runtime level. Clients connect via gRPC (binary Protobuf over HTTP/2) and receive structured streams of accounts, transactions, blocks, slots, and votes.

Best for: indexers, analytics pipelines, MEV/searchers, HFT backends.

| Feature | WebSocket RPC | Yellowstone gRPC (Geyser) |

|---|---|---|

| Protocol | JSON over WebSocket | gRPC (Protobuf over HTTP/2) |

| Latency | low | minimal / very low |

| Throughput | moderate | maximum / high |

| Filtering | basic | advanced (subscription filters) |

| Best fit | UI, moderate bots | ingestion engines, trading systems |

Yellowstone gRPC: Validator-level streaming

Yellowstone gRPC is the standard interface for high-throughput Solana streaming. It taps directly into the Geyser plugin system, delivering binary Protobuf streams over HTTP/2 with advanced filtering and strong typing.

Data types streamed: account updates, transactions, entries, block notifications, slot notifications.

Test your endpoint first (GetSlot smoke test):

Subscribe to transactions with the account filter and processed commitment:

Proto files you need:

Client examples in Go, Rust, and TypeScript:

Commitment levels

Every subscription carries a commitment level. This is not a cosmetic setting. It determines how final a piece of data must be before it triggers your handler.

| Commitment | Practical meaning | Use |

|---|---|---|

processed |

fastest signal, not yet voted | trading reactions, alerts |

confirmed |

voted by supermajority | UX updates, bots |

finalized |

irreversible | accounting, indexing checkpoints |

A common pattern that works in production: act on processed for speed, reconcile on confirmed or finalized for correctness. Mixing them without intent is how teams end up showing phantom state in their UI or double-processing transactions in their indexer.

The lowest layer: Raw shreds and pre-execution feeds

Geyser streams data after execution. If you need data earlier, you go to the shred layer.

Shreds are the raw block fragments broadcast by leaders before a block is fully assembled. Accessing the shred stream gives you the earliest possible signal from the validator pipeline.

The tradeoff is explicit: shred-derived feeds do not include the transaction execution layer. The data is incomplete compared to a full execution-aware stream.

| Feed type | Execution layer | Data completeness | Best fit |

|---|---|---|---|

| Yellowstone gRPC | included | complete stream | transaction ingestion, indexing |

| Shred-derived (Aperture) | not included | incomplete vs Yellowstone | earliest signal, HFT/searchers |

This is the right tradeoff for teams where the earliest possible signal matters more than completeness, and where they reconcile against a full execution stream separately.

The production architecture that survives the mainnet

The hybrid model is what teams actually run in production:

- HTTP RPC—for historical queries and on-demand reads;

- WebSocket—for reactive UI state;

- gRPC—for backend ingestion engines;

- Shred-based feed—for ultra-low-latency strategies where incomplete data is acceptable.

Operational patterns that matter:

- Backpressure: Use bounded queues and worker pools. Decide explicitly whether to drop or replay events when your consumer falls behind.

- Reconnect strategy: Implement automatic reconnect with subscription replay. Handlers must be idempotent. Re-sync state via HTTP RPC on reconnect to close any gap.

- Filtering: Subscribe narrow first. Use

account_includeand program filters to reduce noise. Expand only when you have the consumer capacity to handle it. - Commitment separation: Use

processedfor your trading reaction loop. Useconfirmedorfinalizedfor your state reconciliation path. Do not mix them in the same handler.

The managed RPC Fast Solana RPC SaaS

Most teams do not want to assemble this stack themselves. RPC Fast now offers Solana RPC SaaS: a managed Solana RPC and streaming stack built on production-grade infrastructure.

What it includes:

- Standard JSON-RPC endpoint with 100% healthy dedicated nodes

- WebSocket PubSub subscriptions

- Yellowstone gRPC streaming (same interface as dedicated nodes, different packaging by plan)

- Shredstream gRPC

- Aperture gRPC (Beta)—shred-derived streaming with Yellowstone-compatible interface

- DoubleZero connectivity—a high-throughput dedicated internet layer built for Solana

- SWQoS partner routing for higher landing rates

- Production-grade networking, observability, and failover

The Yellowstone gRPC interface is consistent across dedicated nodes and SaaS plans. What changes is packaging: dedicated nodes give you the endpoint by default, SaaS plans gate it by tier with concurrent stream limits.

What you get per plan

Interface stays consistent across tiers. Quotas differ by plan.

Which streaming model should you use?

| Dimension | WebSocket PubSub | Yellowstone gRPC | Shred-based (Aperture) |

|---|---|---|---|

| Latency | low | very low | earliest |

| Throughput | moderate | high | high |

| Execution-aware | yes | yes | no |

| Data completeness | complete | complete | incomplete |

| Complexity | low | medium | advanced |

| Best for | UI, wallets, moderate bots | indexers, analytics, trading backends | HFT, searchers, pre-execution strategies |

Streaming is not optional on Solana

If your application reacts to the chain state, polling alone is the wrong model. The question is which streaming layer fits your latency budget, throughput requirements, and operational complexity tolerance.

WebSocket PubSub gets you started. Yellowstone gRPC scales your backend. Shred-based feeds push your latency floor as low as the network allows.

We are proud to launch the RPC Fast Solana RPC SaaS, bringing this entire high-performance stack into a single, managed environment.

By integrating standard JSON-RPC with advanced Yellowstone and Aperture gRPC streaming, we provide the infrastructure flexibility required to scale from simple dApps to complex HFT engines. Our goal is to eliminate the operational overhead of managing validator-level data feeds so your team can focus entirely on your goals.

Need to evaluate the right streaming topology for your workload or get access to Aperture gRPC Beta?

Request an architecture review

.jpg)

.jpg)